Someone whose job it is to ask uncomfortable questions about risk put one to me recently that deserves a longer answer than I gave at the time. She's not a technologist, but she has the kind of mind that cuts straight to the structural risk — and she's not afraid to put a financial lens on things everyone else is treating as purely technical.

If AI agents become core infrastructure — if they're woven into how your organisation operates, how your people make decisions, how your systems talk to each other — what stops the providers from charging whatever they want?

It's the Salesforce playbook. You adopt the platform. You build workflows on it. Your data lives there, your processes depend on it, your people know nothing else. Then the price goes up. And up. And you pay, because the switching cost is higher than the increase.

Every enterprise software buyer has lived this. The fear is that AI providers are next.

It's a reasonable fear. It's also, I think, mostly wrong — for reasons that are structural, not wishful.

I co-lead a company that deploys AI across its operations. The question isn't theoretical for us — we're building on these systems every day, and we need to know whether the ground underneath is stable.

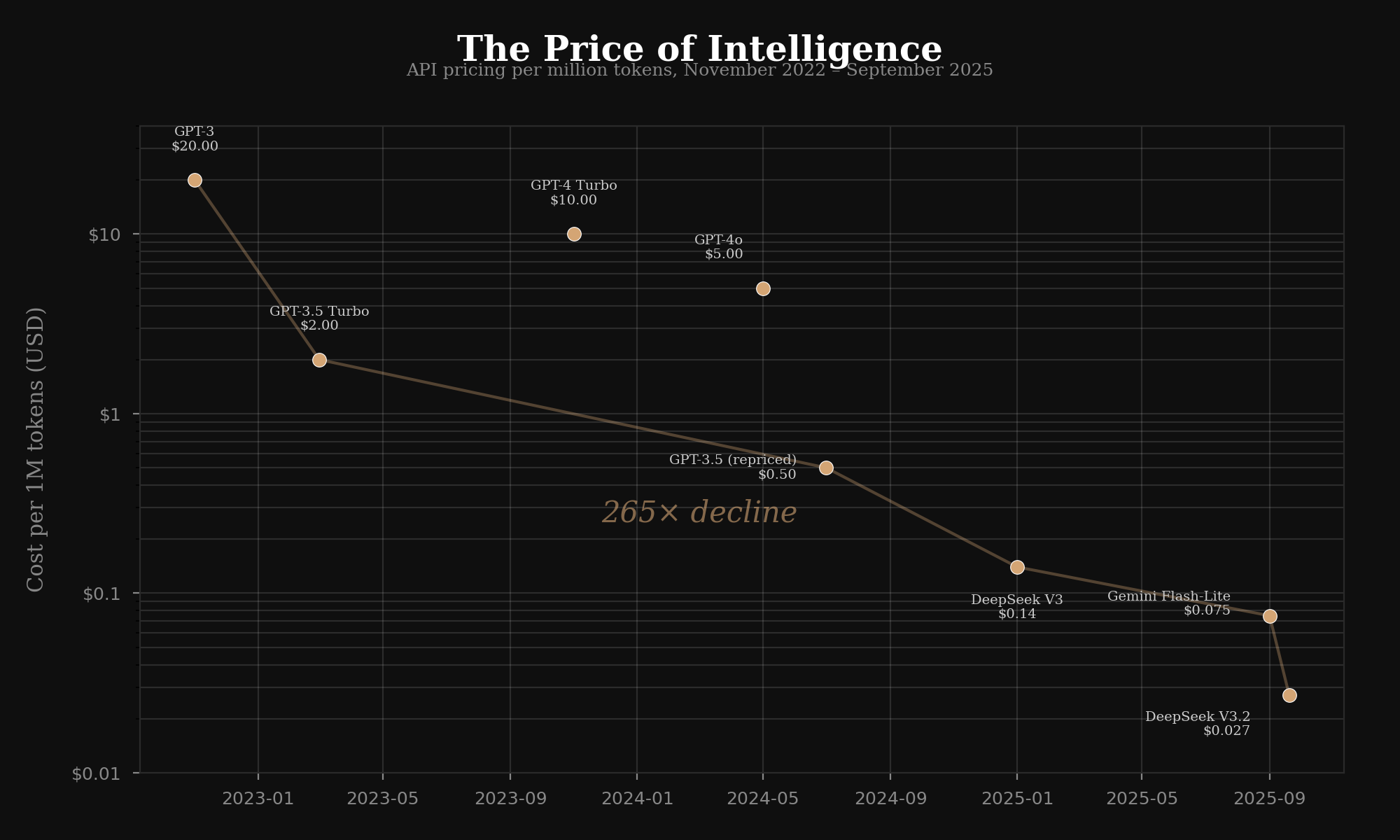

Start with what's already happened to the price of intelligence.

In November 2022, processing a million tokens through GPT-3 cost roughly $20. By late 2025, equivalent capability through Gemini 2.0 Flash-Lite cost $0.075.

That's roughly a 267x decline in three years. Not a gradual erosion but a collapse.

| Date | Model | Cost per 1M tokens |
|---|---|---|
| Nov 2022 | GPT-3 (text-davinci-003) | $20.00 |
| Mar 2023 | GPT-3.5 Turbo | $2.00 |
| Mid 2024 | GPT-4o mini | $0.15 |
| Late 2025 | Gemini 2.0 Flash-Lite | $0.075 |
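For anyone who wants to check the arithmetic, here is a minimal sketch that computes the overall decline from the table's first and last rows. The three-year span and the smooth geometric decline are simplifying assumptions of mine, and the function names are made up for illustration.

```python
# Prices per 1M tokens (USD), copied from the table above.
PRICES = [
    ("Nov 2022", "GPT-3 (text-davinci-003)", 20.00),
    ("Mar 2023", "GPT-3.5 Turbo", 2.00),
    ("Mid 2024", "GPT-4o mini", 0.15),
    ("Late 2025", "Gemini 2.0 Flash-Lite", 0.075),
]


def fold_decline(start_price: float, end_price: float) -> float:
    """How many times cheaper the end price is than the start price."""
    return start_price / end_price


def yearly_multiplier(start_price: float, end_price: float, years: float) -> float:
    """Average per-year price multiplier, assuming a smooth geometric decline."""
    return (end_price / start_price) ** (1.0 / years)


first, last = PRICES[0][2], PRICES[-1][2]
total_fold = fold_decline(first, last)

# Assumption: "Nov 2022" to "late 2025" is treated as exactly three years.
per_year = yearly_multiplier(first, last, years=3.0)

print(f"Total decline: {total_fold:.0f}x")
print(f"Average yearly price change: {(per_year - 1) * 100:.0f}%")
```

The yearly figure is only an average. The table itself shows the drop was lumpy, with the largest single step coming early.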

The drivers are compounding. Inference optimisation — quantisation, distillation, speculative decoding — has reduced compute costs by 2–4x independently of model improvements. Competition between OpenAI, Anthropic, Google, Meta, DeepSeek, Qwen, and Mistral has made pricing a race to the bottom. And open-source models have closed the gap: DeepSeek V3 matches GPT-4-class models on standard benchmarks at a fraction of the cost.

None of this guarantees prices keep falling. But it makes the Salesforce playbook — one provider, captive customers, rising prices — structurally unlikely for the AI layer.

Here's why the analogy breaks.

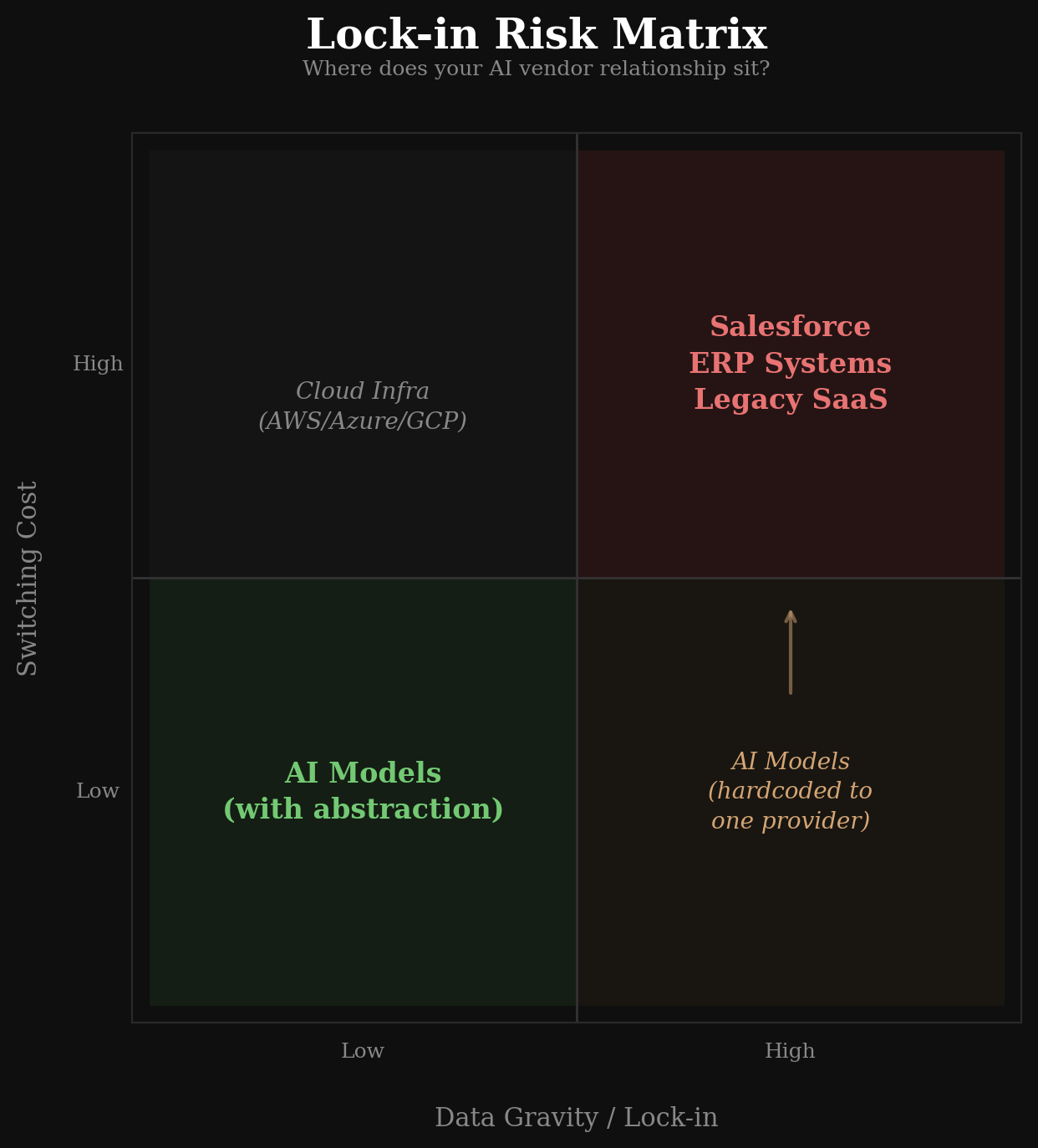

Salesforce's lock-in works because of data gravity — your data, your workflows, and your institutional knowledge are embedded in their platform. Switching means rebuilding everything. The product is the platform.

AI models are different. You reach them through stateless APIs, and the interface is close to standardised: send text in, get text back. Your data never enters the model's permanent memory. Your workflows call the model; they don't run inside it.

And critically, there are now abstraction layers — tools like LiteLLM and OpenRouter — that let you switch between providers with a configuration change, not a migration project.
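To make "a configuration change, not a migration project" concrete, here is a minimal sketch of the idea. It does not use LiteLLM or OpenRouter, and it does not reproduce their APIs. The two backends are fake stand-ins, and every name in it (`LLMConfig`, `BACKENDS`, `complete`, `summarise`) is hypothetical. In a real system each backend would wrap one provider's SDK.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

Message = Dict[str, str]  # {"role": "...", "content": "..."}
Backend = Callable[[str, List[Message]], str]


def _fake_provider_a(model: str, messages: List[Message]) -> str:
    # Stand-in for a real SDK call; echoes the last message for illustration.
    return f"[provider-a/{model}] {messages[-1]['content']}"


def _fake_provider_b(model: str, messages: List[Message]) -> str:
    # A second stand-in with the same signature as the first.
    return f"[provider-b/{model}] {messages[-1]['content']}"


# The only place that knows which providers exist.
BACKENDS: Dict[str, Backend] = {
    "provider-a": _fake_provider_a,
    "provider-b": _fake_provider_b,
}


@dataclass(frozen=True)
class LLMConfig:
    provider: str
    model: str


def complete(config: LLMConfig, messages: List[Message]) -> str:
    """Route a request to whichever backend the config names."""
    try:
        backend = BACKENDS[config.provider]
    except KeyError:
        raise ValueError(f"unknown provider: {config.provider!r}") from None
    return backend(config.model, messages)


def summarise(config: LLMConfig, text: str) -> str:
    """Application logic: it never mentions a specific provider."""
    return complete(config, [{"role": "user", "content": f"Summarise: {text}"}])


# Switching providers means changing this value, not the application code.
config = LLMConfig(provider="provider-a", model="small-model")
print(summarise(config, "quarterly risk report"))

config = LLMConfig(provider="provider-b", model="other-model")
print(summarise(config, "quarterly risk report"))
```

The design choice that matters is that `summarise` depends only on `complete`. Provider-specific code stays behind the registry, which is what keeps a switch cheap.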

The lock-in risk in AI isn't the model. It's everything you build around it.

If you hardcode to one provider's API, fine-tune exclusively on their infrastructure, use their proprietary features without abstraction — then yes, you're locked in. But that's an architectural choice, not an inevitability.

The equivalent in the Salesforce world would be if you could swap CRM providers by changing one line in a config file. You can't. With AI models, increasingly, you can.

So if the price of intelligence is falling and the lock-in is avoidable, what should you actually worry about?

Two things.

Energy. This is the one that doesn't get enough attention. AI data centres are scaling to gigawatt-level campuses. The International Energy Agency (IEA) projects that data centre electricity consumption could reach 1,000 terawatt-hours annually, roughly equivalent to Japan's entire electricity consumption. Grid infrastructure isn't keeping pace.

Microsoft has signed a 20-year deal to restart Three Mile Island's Unit 1 reactor. Google has contracted Kairos Power to build a 500-megawatt fleet of advanced nuclear reactors. Amazon is investing $20 billion in data centre infrastructure adjacent to the Susquehanna nuclear plant.

When the tech giants are commissioning their own power supply, that tells you where the real constraint lives.

Token prices follow a deflationary curve. Energy prices don't.

At scale — when you're running hundreds of agents across an organisation — energy costs may matter more than token costs. That's a different kind of infrastructure dependency, and one that's harder to abstract away.

Architectural debt. The decisions you make today about how your agents are built will determine how much leverage you have in three years. This is the part you can control.

The principle is simple: build so you can leave.

That means abstraction layers between your application logic and any single provider. It means storing memory, context, and conversation history in systems you own — not in the provider's platform. It means designing prompts and workflows around capabilities, not around one model's idiosyncrasies. And it means testing against multiple providers regularly, so switching is a decision you can make, not a project you'd have to plan.
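Two of those habits can be sketched in a few lines: keeping conversation history in a store you own, and replaying that history against more than one provider as a routine check. This is an illustrative sketch only. `ConversationStore` and `check_portability` are hypothetical names, the providers are canned stubs rather than real API calls, and the refund example is invented test data.

```python
import sqlite3
from typing import Callable, Dict, List

Message = Dict[str, str]
Provider = Callable[[List[Message]], str]


class ConversationStore:
    """Conversation history kept in a database you control (SQLite here)."""

    def __init__(self, path: str = ":memory:") -> None:
        self.db = sqlite3.connect(path)
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS messages ("
            "id INTEGER PRIMARY KEY AUTOINCREMENT, "
            "conversation_id TEXT NOT NULL, "
            "role TEXT NOT NULL, "
            "content TEXT NOT NULL)"
        )

    def append(self, conversation_id: str, role: str, content: str) -> None:
        self.db.execute(
            "INSERT INTO messages (conversation_id, role, content) VALUES (?, ?, ?)",
            (conversation_id, role, content),
        )
        self.db.commit()

    def history(self, conversation_id: str) -> List[Message]:
        rows = self.db.execute(
            "SELECT role, content FROM messages "
            "WHERE conversation_id = ? ORDER BY id",
            (conversation_id,),
        ).fetchall()
        return [{"role": role, "content": content} for role, content in rows]


def check_portability(
    providers: Dict[str, Provider], history: List[Message], must_contain: str
) -> Dict[str, bool]:
    """Replay the same history on every provider and check a required phrase."""
    return {
        name: must_contain.lower() in call(history).lower()
        for name, call in providers.items()
    }


store = ConversationStore()
store.append("c1", "user", "What is our refund window?")

# Canned stubs standing in for real provider calls (invented responses).
providers: Dict[str, Provider] = {
    "provider-a": lambda history: "The refund window is 30 days.",
    "provider-b": lambda history: "Refunds are accepted within 30 days.",
}

results = check_portability(providers, store.history("c1"), "30 days")
print(results)
```

Because the history lives in your own database, any provider can be handed the same context. A substring check is a crude pass criterion, so a real suite would need something stronger, but even this much tells you whether a switch is feasible before you need it.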

Build tightly coupled to one provider and you'll pay whatever they charge, even if their competitors are cheaper. Build with portability from day one and you preserve the optionality that the market is trying to give you.

Her question was really about control. When something becomes load-bearing, who has the power?

The honest answer: it depends on how you build it.

The market dynamics are on your side — prices are falling, competition is fierce, open-source is at parity, and the switching cost is genuinely low if you design for it. But the market can only give you options. Whether you take them is an architecture decision.

The organisations that will feel locked in three years from now are the ones making expedient choices today. The ones that won't are the ones treating provider independence as a first-class requirement — not because the current provider is bad, but because the ability to leave is what keeps the relationship honest.


That's not a technology insight. It's a governance one.

This essay is about what happens when AI becomes the infrastructure you depend on. In a companion essay, I look at the same question from the other side: what happens when you remove the human infrastructure — the hierarchy, the management layers, the organisational scaffolding — that has been carrying weight you may not see until it's gone.